What is a string?

Strings are one of those basic constructs that are so important to communicating that many programming languages include them as a basic type.

What is a string? It is nothing more than a series of zero or more characters. For computers, characters are just symbolic representations of numbers. What is the difference between the letter `A` and the number `65`? To a computer nothing. The difference is how the value is interpreted by the higher level language. If a program labels the as an integer then it is the number `65`. If a program labels the value as a character then the value is interpreted as the letter `A`.

If we want to create a string then we need to represent it as a series of characters. So the string `Hello, World!` is a series of 13 characters that are put together to form a string.

8-bit ASCII

Once of the first standards for encoding characters was the ASCII character set. The ASCII table contains a set of values that can be represented in 8 bits. This is a standard used to ensure a standard way to exchange information. This ensures that when a computers stores the 8 bit representation of an `A` that all other computers would understand what that the value represents and not interpret is as different value.

UTF-16 A New Encoding

As computers spread throughout the world the need to be able to represent characters outside of the standard English alphabet became more important. New ways of encoding characters were created to enable this. One of these is the UTF-16 character encoding. This encodes characters in a 16-bit instead of 8-bits. This requires more memory per character but allows for a much richer set of characters to be encoded.

What is in a memory?

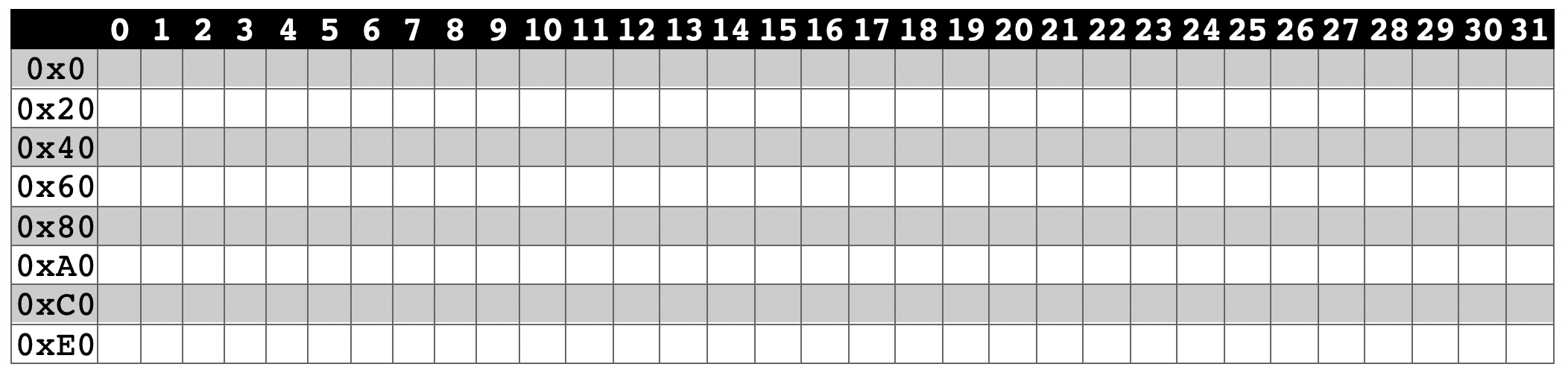

To better understand what a string is we need to understand how it is stored in a computer. First we will look at the architecture of a 32-bit computer. This means that every spot in memory has an address that can be represented by 32 bits. One way to think about this is a piece of graph paper.

Imagine a piece of graph paper that has a grid on it. The grid is 32 squares wide. The rows represent a single row in the memory. So if you have 256 bits of memory on a 32 bit architecture you would have 8 rows total.

There are a lot of different architectures out there for computers. The most common are 32-bit and 64-bit. However, there are plenty out there that have a different architecture. Additionally, how memory is addressed on each of the architectures depends on the implementation. For more information word sizes see this article

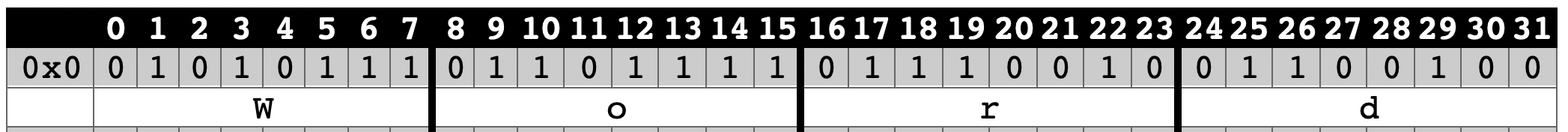

If we use an 8-bit representation to store and encode characters like in ASCII then we could represent a Word as four characters. Each of the characters are stored in a 1-byte space of memory.

If we start at the memory address of 0 (0x0) then we would have the character W at memory address 0 (0x0) followed by the character o at address 8 (0x8), the character r at address 16 (0x10) and finally the character d at address 24 (0x18).

Since computers store information in the form of 1's and 0's the characters are represented as binary numbers. Using the ASCII representation results in the letters represented as the following numbers.

| Character | Decimal | Hex | Binary |

|---|---|---|---|

| W | 87 | 56 | 0101 0111 |

| o | 111 | 6F | 0110 1111 |

| r | 114 | 72 | 0111 0010 |

| d | 100 | 64 | 0110 0100 |

If we put the characters into our memory space as a series of contiguous 8-bit (1-byte) numbers we get something that looks like this.

A String

A string is just a series of contiguous characters stored in memory. To store a string we need to know two things. The starting address of the first character and the size of the string or length of string. The length is just the number of characters in the string.

Now that we have a Word stored in memory we can see that the first character starts at address 0x0. This is where the starting address of a string would be indicated. The length of Word is 4. When we want to get the value of the string we return the address of the first character and each character that follows until we have reached the length of the string.

Conclusion.

That is a relatively basic explanation. The specifics on how a string is stored and read will depend on the architecture of the hardware that is being used. When writing in a programming language the manner in which you define the string will also be dependent upon the language. Some define a string as an array of characters. Others have a type that represents a string just like a type represents an integer.

You must be logged in to see the comments. Log in now!